The numbers are in, and they’re hard to ignore.

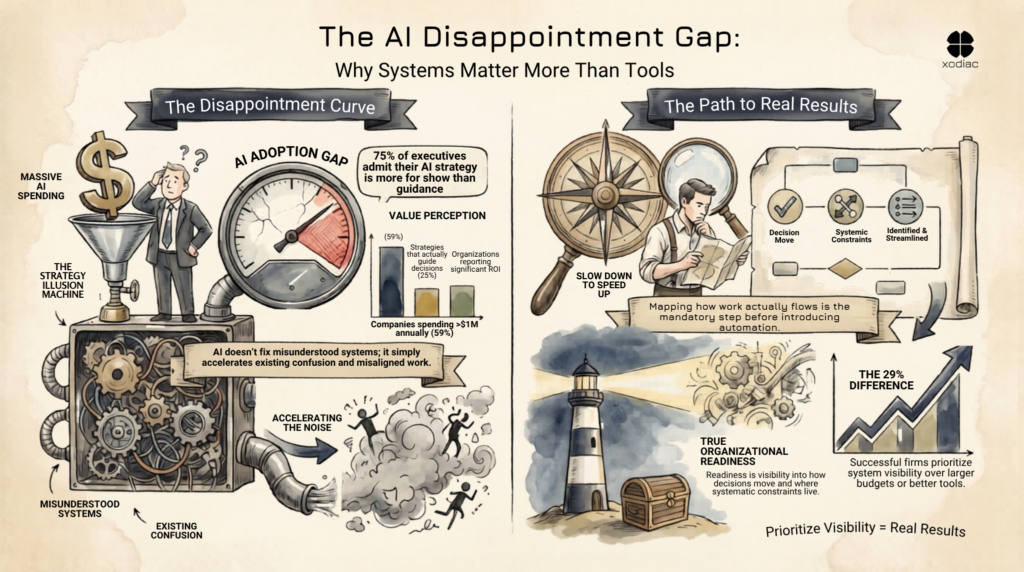

48% of enterprise leaders now describe their AI adoption as a ‘massive disappointment.’ One year ago, that figure was 34%. Investment hasn’t dropped: 59% of companies are spending over $1 million annually on AI. The technology hasn’t stopped improving. And yet results are falling further behind expectations, not catching up to them.

Something structural is going wrong. The question is what.

48% of enterprise leaders call AI adoption disappointing. The 29% seeing real returns understood their delivery system first.

The Strategy Gap No One Is Talking About

Before diagnosing the adoption failure, it’s worth sitting with one finding from recent research: 75% of executives admit their company’s AI strategy is ‘more for show’ than genuine internal guidance.

That’s a remarkable admission. It means three-quarters of the organizations currently deploying AI at scale are doing it without a strategy that actually shapes how decisions get made. The strategy exists as a document, or a slide deck, or a press release, but it isn’t connected to the real system through which work moves.

This matters because AI doesn’t operate in a vacuum. It operates inside delivery systems: the full set of processes, decisions, tools, and behaviors through which technology work moves in an organization. When the strategy doesn’t map to that system, the technology lands in a context it wasn’t designed for.

The results are predictable: low ROI, confused workflows, teams that can’t tell whether AI is helping or creating more work to manage.

Accelerating the Wrong Things

Here’s what the organizations failing at AI adoption often have in common: they deployed AI into systems that were never designed for what they’re now being asked to do. This isn’t about ignorance. Many of the people closest to these systems are acutely aware of how complex and unwieldy they’ve become. These systems often grew over time, sometimes from entirely manual beginnings, inherited by teams who’ve been managing their weight for years. AI doesn’t land in a clean environment. It lands in a system that’s already straining, and it accelerates whatever is already there.

This isn’t a criticism of their intentions. It reflects a pattern that’s visible across technology adoption cycles. New tools don’t fix misunderstood systems; they amplify them. If handoffs between teams are unclear, AI makes them faster and more confusing. If prioritization is misaligned across leadership, AI accelerates the production of work that nobody asked for.

AI doesn’t create organizational problems. It reveals them. The organizations discovering that AI is a ‘massive disappointment’ are, in many cases, discovering that the problems they hoped AI would solve were actually systemic constraints they hadn’t yet named.

The 29% Who Actually See Returns

The 29% of organizations reporting significant ROI from generative AI tend to share a different profile. Not better tools. Not bigger budgets. They understood how work actually flowed through their system before introducing AI into it, which meant they could deploy AI where it would genuinely accelerate things, rather than where it would accelerate the noise.

What ‘Organizational Readiness’ Actually Means

The phrase ‘organizational readiness’ appears constantly in the research on AI adoption. Most discussions frame it as a change management problem: do people have the right mindset? Are stakeholders bought in? Is training sufficient?

These things matter. But readiness runs deeper than culture and communication.

True organizational readiness means understanding the delivery system well enough to know where AI will help and where it will create new complexity. It means having enough visibility into how decisions are made, how work flows, and where constraints live to make an informed choice about where AI enters the picture, and what guardrails it needs when it does.

Without that visibility, organizations are deploying AI the same way they deployed the last round of enterprise software: by announcing a strategic initiative, training people on the tool, and hoping the results follow. They often don’t. Not because the tool is wrong, but because the system wasn’t ready.

The Step Most Organizations Are Skipping

The organizations in the 29% that are seeing real returns from AI didn’t skip a step. They took the time to map how work actually moves through their system. That time is often uncomfortable, because it requires slowing down before speeding up.

That mapping process surfaces the real constraints limiting delivery. It builds shared understanding across leadership, which is the alignment AI governance actually requires. And it makes visible the handoffs, decision points, and control structures where AI will actually operate.

Only then does introducing AI into the system produce the results the investment is supposed to generate.

The organizations hitting the disappointment curve are investing in the tool before investing in the foundation. The fix isn’t a different tool, or a better adoption program, or a more senior AI strategy lead. It’s taking the system seriously enough to understand it before you change it.

Xodiac works with leadership teams to make their delivery systems visible, so technology investments, including AI, land where they’re actually supposed to. If your organization is navigating the gap between AI investment and AI results, assess whether it is ready to understand its delivery system today.

Most failures trace back to one root cause: deploying AI into systems you don’t fully understand. Organizations often launch AI without mapping how work actually moves through their delivery system. When AI lands in unclear workflows, it accelerates confusion instead of removing it. The technology isn’t the problem. The system it’s entering is.

Organizational readiness goes deeper than culture and training. True readiness means visibility into how decisions are made, where constraints live, and how handoffs flow between teams. If leadership can clearly answer “where will AI actually help?” and “where will it create new complexity?” you’re ready. If those answers are guesses, you’re not.

Having a strategy (on paper) is common. Executing one is rare. 75% of executives admit their AI strategy is “more for show” than genuine guidance that shapes decisions. The gap is this: your strategy doesn’t matter until it connects to how your delivery system actually works. A strategy disconnected from that reality is just a document.

It depends on organization size and complexity, but the process itself is about depth, not duration. You’re not trying to document everything. You’re surfacing real constraints, decision bottlenecks, and handoff failures that are limiting delivery. For most leadership teams, clarity emerges within weeks, not months. The uncomfortable part isn’t the timeline; it’s the honesty required to see the system as it is.

The best time is before. Bringing in clarity on your delivery system before AI enters it means you deploy strategically, not reactively. But if you’ve already deployed without that foundation, don’t assume you’re stuck. Many organizations discover systemic constraints through failed AI rollouts, then use that learning to strengthen the system. Either way, the question isn’t “consultant or not”; it’s “do we understand our system before we add more complexity to it?”

Track ROI metrics that matter to your business, but also track process metrics: Are handoffs clearer? Is decision alignment improving? Are teams doing less repetitive work? If AI is truly helping, you’ll see both. If ROI is up but teams are busier and more confused, something structural is still broken. The organizations seeing real returns from AI track both the outcomes and the system health.

It means introducing AI incrementally, with governance in place from day one. It means knowing where AI is operating, what decisions it’s influencing, and who needs to understand it. Most organizations rush to scale AI without building that foundation. Going fast safely means you slow down first to understand your system, then scale with confidence.

Diagnosis comes first. Take time to map your delivery system and understand where the real constraints are. Often, what looks like an AI problem is actually a systemic problem that AI exposed. Once you see the system clearly, the path forward becomes obvious. That’s when real change, and real ROI, becomes possible.