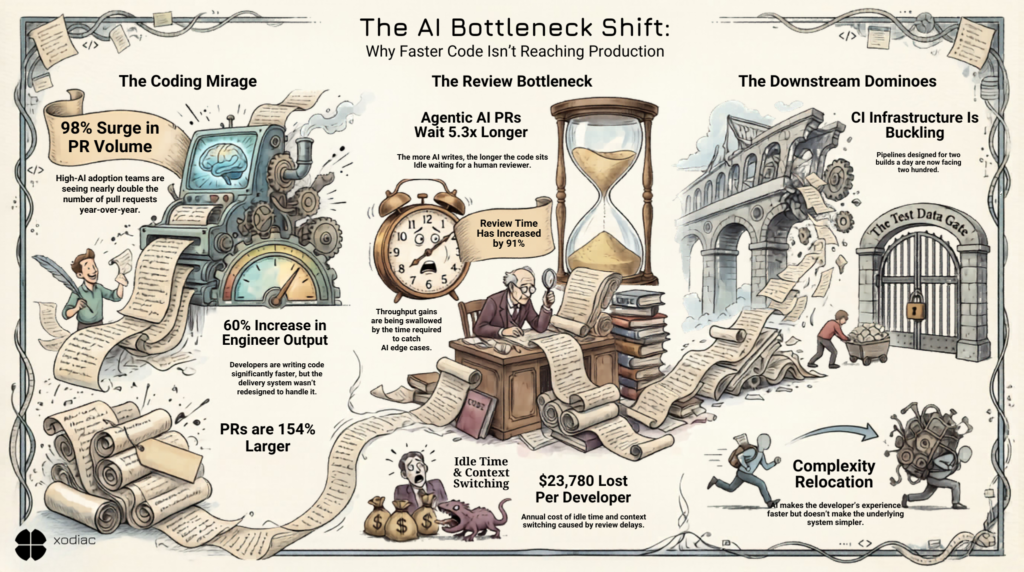

Last year, your engineering team shipped more code than ever before. Pull request volume is up roughly 98% year-over-year in high-AI-adoption teams – with median teams now merging 12.4 PRs per developer per month.

And yet, 95% of developers now spend meaningful time reviewing, testing, and correcting AI output. In the 2026 Sonar State of Code survey of 1,149 developers, code review ranked as the #1 most important skill for the AI era – cited by 47% of respondents. Not prompt engineering. Not deployment. Review.

Because AI multiplied the code. Nobody multiplied the reviewers.

The constraint didn’t disappear. It relocated.

We’ve been tracking this pattern across organizations for several weeks – and in high-AI-adoption teams, the code review layer is where it’s hitting hardest first. The tools did exactly what they were supposed to do. Developers write code faster. Output per engineer is up roughly 60% year-over-year. The problem isn’t that the tools failed.

The problem is that the delivery system wasn’t redesigned around them. Finding the real bottleneck is harder than it looks – and it rarely sits where the dashboard says it does.

Think about what AI-assisted development actually does to a delivery pipeline. A developer who used to write three pull requests a day now writes six. The PRs are larger – 154% larger, year-over-year. The code still needs to be reviewed. Someone still needs to read it, understand it, catch edge cases, and approve it for merge.

That someone has the same number of hours they had before.
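As a rough sketch of what that means for a reviewer, the figures above can be combined into a single multiplier. This assumes, purely for illustration, that review effort scales linearly with both the number of PRs and their size; real review effort is unlikely to be that tidy, and the function name is hypothetical.

```python
def relative_review_load(pr_count_multiplier: float, pr_size_multiplier: float) -> float:
    """Review work relative to the old baseline.

    Assumption (not from the data): effort scales linearly with
    PR count and with PR size.
    """
    return pr_count_multiplier * pr_size_multiplier


pr_count_multiplier = 6 / 3         # three PRs a day becomes six
pr_size_multiplier = 1 + 154 / 100  # PRs are 154% larger

load = relative_review_load(pr_count_multiplier, pr_size_multiplier)
print(f"Review load: {load:.2f}x the old baseline")  # Review load: 5.08x the old baseline
```

Under that assumption, the same reviewer hours would have to cover about five times the old workload.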

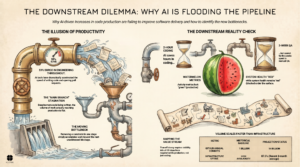

When you compress the coding tasks without redesigning the review tasks, the constraint relocates. The system gets faster in one place and backs up in another. And because the bottleneck moved rather than disappeared, it’s easy to miss. Teams see high output numbers and assume the system is working. The queue builds quietly downstream. That gap between reported progress and actual progress has a cost – and by the time it surfaces, it’s usually already a crisis.

What the AI code review data shows

PR review time is up 91% in high-AI-adoption teams. That number deserves a moment. Output nearly doubled, and the time it takes to review that output nearly doubled too. The throughput gain from AI-assisted coding is, in many teams, being absorbed by review latency.

The gap is even sharper at the individual PR level. Agentic AI PRs wait 5.3x longer for pickup than unassisted ones – and AI-assisted PRs wait 2.47x longer. The more AI writes, the longer each PR sits. And 38% of developers say reviewing AI-generated code requires more effort than reviewing human-written code – so when it finally gets picked up, it takes longer too.

The cost isn’t theoretical. Research tracking eight million pull requests calculated approximately $23,780 per developer per year lost to idle time caused by review bottlenecks – developers waiting on feedback before they can proceed, context switching to other work, then re-orienting when review finally comes through.

Multiply that across a team of twenty developers and you have a real number: roughly $475,600 a year.

The constraint kept moving

For teams that have already solved the PR review problem – and some have, through better tooling, pairing, or restructured review ownership – there’s a next layer waiting.

We’ve seen it in financial services. A CI pipeline with a one-minute build time was not a problem when developers triggered it twice a day. AI-assisted development changed that. The same pipeline, the same infrastructure, now running two hundred times a day. One minute times two hundred is two hundred minutes of build time on infrastructure sized for two, and every run that arrives while another is in progress waits its turn. The pipeline didn’t break. No alerts fired. But everything downstream was stalled.
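The arithmetic can be sketched as a tiny queue simulation. It assumes a single runner that processes builds one at a time in arrival order. The arrival patterns are invented for illustration and do not come from the organizations described here.

```python
def simulate_single_runner(arrivals, build_minutes=1.0):
    """Serve builds first-come, first-served on one runner.

    arrivals: trigger times in minutes since the start of the day.
    Returns (busy_minutes, total_wait_minutes).
    """
    runner_free_at = 0.0
    total_wait = 0.0
    for triggered_at in sorted(arrivals):
        start = max(triggered_at, runner_free_at)  # wait if the runner is busy
        total_wait += start - triggered_at
        runner_free_at = start + build_minutes
    return build_minutes * len(arrivals), total_wait


# Before: two builds a day, hours apart.
before = [60.0, 300.0]
# After: 200 builds in an 8-hour (480-minute) day, spread perfectly evenly...
even = [i * 2.4 for i in range(200)]
# ...or arriving as 20 bursts of 10 simultaneous triggers, 24 minutes apart.
bursty = [burst * 24.0 for burst in range(20) for _ in range(10)]

print(simulate_single_runner(before))  # (2.0, 0.0)
print(simulate_single_runner(even))    # (200.0, 0.0)
print(simulate_single_runner(bursty))  # (200.0, 900.0)
```

In this sketch the runner is busy for the same 200 minutes whether the builds arrive evenly or in bursts. What changes is how long the builds sit in the queue, which is the part a utilization chart does not show.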

The teams that ran into this didn’t see it coming because the problem was invisible from the developer side. The code was flowing. The PRs were merging. The bottleneck had moved into infrastructure that nobody had budgeted to scale – and that most developers had quietly handed back to a platform engineering team to manage.

That handoff is familiar. Developers never truly wanted to own CI infrastructure. They built enough automation to make deployment possible, then moved on. What AI has done is drastically increase the volume hitting systems that were designed for a different load. The platform team now has a fire they didn’t start and weren’t staffed to fight.

Wayne Hetherington, who leads delivery transformation work at Xodiac, points to a related problem further downstream: test data. Some organizations take weeks to prepare a valid data set for a QA environment. AI has accelerated the pace at which code reaches that gate. The gate hasn’t moved. The queue in front of it has grown, and when queues grow, work gets batched up. Large-batch deployments were never a good idea, and doing them 200 times a day is a nightmare.

The complexity has to exist somewhere

This is the through-line in all of it: the complexity doesn’t disappear when you add AI. It relocates.

A one-click deployment that hides all the infrastructure work behind it isn’t simple – it’s complexity that has been moved somewhere else. Your container orchestration system, your database backups, your networking layer, your secret management – it’s all still there. AI made the developer’s experience faster. It didn’t make the underlying system simpler.

The organizations figuring this out are the ones that have stopped asking “where is the bottleneck now?” and started asking “where will it go next?” That’s a different kind of visibility. It means mapping the full delivery path – not just the parts that are currently on fire – and understanding where each stage was designed for a volume that no longer describes reality.

The question to ask this week

Before the next AI tool enters your pipeline: find where your delivery system is actually stalling.

Not where the dashboard says. Where the work is actually waiting. Talk to the developers who are watching build queues. Talk to the platform engineers who are fielding the escalations. Map the real path from commit to customer. That’s exactly what value stream mapping is designed to show you – not just where things are moving, but where they’re quietly stacking up.
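One way to start that map is to record, for each stage, how long a change is actively worked on and how long it waits. The sketch below uses invented stage names and hours purely for illustration. In practice the numbers would come from timestamps in your own ticketing, review, and CI tooling.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    active_hours: float   # time someone (or something) is working on the change
    waiting_hours: float  # time the change sits in a queue at this stage


# Hypothetical numbers for illustration only.
stages = [
    Stage("coding", 4.0, 0.5),
    Stage("code review", 1.0, 20.0),
    Stage("CI build", 0.1, 2.0),
    Stage("QA / test data", 3.0, 40.0),
    Stage("deploy", 0.5, 1.5),
]


def flow_efficiency(stages):
    """Share of total lead time spent on active work rather than waiting."""
    active = sum(s.active_hours for s in stages)
    total = active + sum(s.waiting_hours for s in stages)
    return active / total


def biggest_queue(stages):
    """The stage where work waits longest."""
    return max(stages, key=lambda s: s.waiting_hours)


print(f"Flow efficiency: {flow_efficiency(stages):.0%}")
print(f"Longest wait: {biggest_queue(stages).name}")
```

Sorting stages by waiting time rather than active time is the point of the exercise: the stage with the longest queue is often not the one that looks busiest.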

Knowing where the work actually waits will tell you what your delivery system needs – and whether more tooling is it.

If you want to map your delivery system before the next tool decision, that is the work we do.