A pattern of AI burnout is emerging in 2026 that should change how organizations think about their rollouts. The gap between AI’s promise and its actual results is already well documented – but the cost to the people who tried hardest to make it work is only now becoming clear.

The workers burning out the fastest are not the ones who resisted AI. They are not the skeptics who refused to engage, the late adopters who waited to see how it played out, or the employees who quietly kept doing things the old way.

The workers burning out fastest are the ones who embraced AI earliest and most completely and are now doing the most work with it.

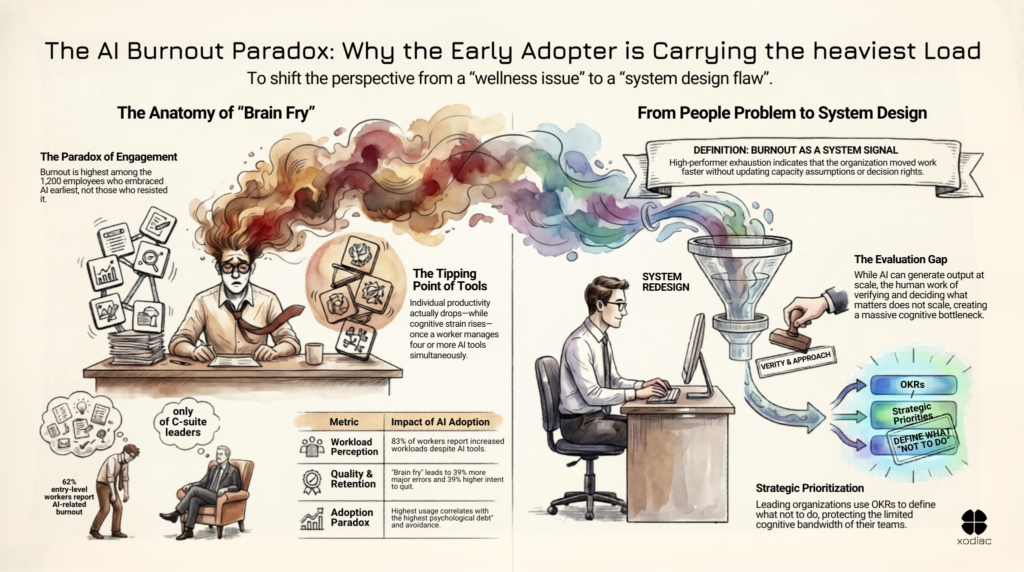

HBR published the research this month: a survey of 1,200 employees found that the ones carrying the highest “psychological debt” from AI adoption were also showing the most avoidance behavior, the lowest usage rates, and the least sophisticated application of AI tools – even when they acknowledged AI’s value. They didn’t reject AI. They absorbed too much of it, without a system designed to help them carry the load.

What AI adoption actually does to a workday

A separate BCG study tracked 1,488 workers. The researchers documented what they called “brain fry” – cognitive overload, information fatigue, and mental exhaustion from the constant work of evaluating AI output. Reviewing what the model produced. Checking whether it’s right. Deciding what to keep, what to discard, and what needs to be redone. Among workers using four or more AI tools, individual productivity actually dropped – while cognitive strain kept rising.

The consequences aren’t just cognitive. Workers affected by brain fry reported 39% more major errors and 39% higher intent to quit. The people organizations most need to retain are the ones most at risk of leaving.

The output volume changed. The system didn’t.

TechCrunch reported the same pattern earlier this year: the first signs of burnout are concentrated precisely among the employees most engaged with AI tools. The people a manager would point to as AI success stories.

83% of workers say AI increased their workload. That number is not a complaint about AI. It is a description of what happens when the tools change and the system doesn’t. And the burden isn’t evenly distributed – 62% of entry-level workers and associates reported burnout, compared to 38% of C-suite leaders. The people with the least structural support are absorbing the most.

What it actually looks like

Running seven workstreams in parallel is now possible in a way it wasn’t a year ago. AI can keep them all moving – prototyping, drafting, building, experimenting. What it can’t do is think faster on your behalf, or absorb the cognitive load of steering all of it.

That’s the gap nobody talks about when they describe productivity gains. The output is real. The evaluation work required to direct it, verify it, and decide what actually matters is also real – and it doesn’t scale the way the generation does. At some point, the person who is “the human in the loop” isn’t more productive. They’re just carrying more.

AI doesn’t fix a misunderstood system – it amplifies it.

The structure didn’t change

Here is what is happening in most organizations.

A developer, a marketer, a financial analyst – each of them now uses AI to produce more output in less time. The code gets written faster. The campaign brief gets drafted faster. The model runs faster. The output arrives.

And then what?

The output still needs to be reviewed, verified, approved, and integrated into a workflow built for a slower pace of production, then handed off to someone downstream whose process hasn’t changed. The decisions that used to arrive one at a time now arrive in batches. The context-switching is constant. The verification work is not visible on any dashboard.

The people absorbing that complexity are the ones who said yes first. They’re doing more – and carrying the overhead that the extra output creates in a system the organization never redesigned around them.

AI burnout as a system signal

This is the reframe that matters:

AI burnout in adopting teams is not a wellness problem.

It is a delivery system signal.

When the highest performers in an AI rollout show signs of cognitive exhaustion, it means the organization moved the work faster without redesigning the structure that holds it. The organization never updated its capacity assumptions. The decision rights stayed unchanged. Nobody mapped the handoff points for the new volume.

The people are doing their jobs. The system is failing them.

This distinction matters practically. An organization that treats AI burnout as a people problem will respond with wellness initiatives, workload conversations, or usage guidelines. These aren’t wrong. But they don’t address the root cause. The system will keep producing the same conditions.

An organization that treats AI burnout as a system design problem asks a different set of questions. Where is the verification work accumulating? Which roles now carry more cognitive load than they were designed to hold? What decisions were automated but still require human judgment downstream? What did we assume about human capacity when we rolled out these tools?

The question before the next rollout

The Xodiac framing on this is specific: design the system for the capacity you actually have. Not the capacity AI suggests you could theoretically reach. The actual cognitive bandwidth your team can deploy against decisions, evaluations, and judgment calls on a given day.

That means using OKRs, horizon planning, and prioritization not just to decide what to work on – but to define what not to do. Just because AI can generate more doesn’t mean your organization has to absorb more. The organizations navigating this well are building explicit criteria for when to say no – into sprint planning, into roadmap reviews, into the intake process before work begins.
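As a rough illustration of what explicit "say no" criteria at intake could look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `WorkItem` fields, the `triage_intake` function, the hour estimates, and the idea of budgeting by human review hours are hypothetical, not a description of any specific organization's process.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    # Hypothetical fields, chosen for illustration only.
    name: str
    review_hours: float  # estimated human evaluation time, not generation time
    priority: int        # lower number means higher priority


def triage_intake(items, review_capacity_hours):
    """Accept items in priority order while human review capacity remains.

    Anything that does not fit in the remaining review budget is declined,
    no matter how quickly AI could generate it.
    """
    accepted, declined = [], []
    remaining = review_capacity_hours
    for item in sorted(items, key=lambda i: i.priority):
        if item.review_hours <= remaining:
            accepted.append(item.name)
            remaining -= item.review_hours
        else:
            declined.append(item.name)
    return accepted, declined, remaining


# Illustrative backlog with made-up estimates.
backlog = [
    WorkItem("campaign brief", 3.0, 2),
    WorkItem("pricing model refresh", 6.0, 1),
    WorkItem("prototype B", 5.0, 3),
    WorkItem("release notes", 1.0, 4),
]

accepted, declined, remaining = triage_intake(backlog, review_capacity_hours=10.0)
print("Accepted:", accepted)
print("Declined:", declined)
print("Review hours left:", remaining)
```

The design choice worth noticing is that the budget is the team's review capacity, not the volume AI can produce. Work that would exceed it is declined at intake rather than absorbed by whoever said yes first.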

Before the next AI tool enters your organization, ask what your team’s actual day looks like right now.

Not the adoption dashboard. Not the productivity metrics. The real day. How much of it is evaluation work? How much is decision-making that wasn’t there before? How many context switches happen between “the AI produced something” and “we actually shipped something”?

If you want to assess whether your organization’s AI rollout was designed for the cognitive load it produces, that’s exactly the work we do.

If the people who said yes first are starting to slow down, your delivery system is sending you a message. The question is whether you’re reading it as a people problem or a system problem.